5 Things to Consider About Data Collection in AAC

As a rule, SLPs are pretty good about collecting data in their clinical work. Here are some of our prAACtical thoughts about data collection.

1. Don’t bite off more than you can chew. We’ve visited several programs where the client data filled a huge 3-ring binder. In some places, they logged the data daily, reviewed it frequently, and actually USED it to make programmatic decisions. If that works for you, great! But most programs only reviewed the data when they had to report it or prior to a visit by someone who might want to see and discuss those data. In those cases, the data really weren’t serving their original purpose: to see how instruction might need to be tweaked for a client who was learning quickly, slowly, or not at all. The takeaway: Don’t collect more data than you’re prepared to review and put to use.

2. We should be tracking meaningful things. I love this social worker’s ideas for data collection at recess. She tracks things like the percent of time a child is engaged in play with others, the number of times they need help resolving conflict, and the number of times they are sought after as a play partner (as compared to how many times they seek out others). Now, that’s prAACtical!

3. You don’t have to collect data at every session. For my clients with semantic goals, for example, some sessions are geared to instruction. In those sessions, we provide focused language stimulation, explicit teaching, and lots of practice in different activities. We often don’t take data on those words until a future session. That’s okay. It makes sense not to take data on a word meaning after you just taught and practiced it, right? Don’t slip into autopilot and take data every session just because that’s the way we’re used to doing it. Take data when it makes sense to do so.

4. Don’t use data to make decisions until you’re confident that the data are accurate. We often assume that the data are correct, but it’s a good idea to check, particularly if you are new at this. And you know what? Taking data on some of these skills isn’t as easy as you might think. Last month we were working with some beginning communicators who had goals like getting attention and signalling for more. Sounds simple, right? Well, it wasn’t. One person counted only communicative acts that used picture symbols. Another counted vocalizations and touches. Who was right? They both were, because we hadn’t clearly defined what constitutes a request for attention or recurrence. Once we did that and everyone was looking for the same thing, we were able to get usable data.

Inter-rater reliability isn’t just for research. We often do this with our student clinicians at the start of a new semester, because unless the data are accurate, they don’t represent the client’s actual performance. And if we don’t have a clear picture of the client’s current level of performance, then setting a target for future achievement isn’t very meaningful.
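For anyone who wants to put a number on how closely two observers agree, here is a minimal Python sketch. It assumes simple point-by-point percent agreement, which is only one way to check reliability and not necessarily the method we use with our student clinicians. The function name `percent_agreement` and the sample observations are made up for illustration.

```python
def percent_agreement(rater_a, rater_b):
    """Point-by-point agreement between two observers, as a percentage.

    Each list holds one judgment per opportunity, in the same order
    (here: True if the observer counted a request for attention).
    """
    if len(rater_a) != len(rater_b):
        raise ValueError("Both raters must score the same opportunities")
    if not rater_a:
        raise ValueError("No observations to compare")
    # Count the opportunities on which both observers made the same call.
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100 * matches / len(rater_a)


# Hypothetical data: eight opportunities scored by two observers.
clinician = [True, True, False, True, False, True, True, False]
student = [True, False, False, True, False, True, True, True]

agreement = percent_agreement(clinician, student)
print(f"Agreement: {agreement:.1f}%")  # Agreement: 75.0%
```

A low number here is usually a sign that the behavior needs a clearer definition, not that one observer is careless.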

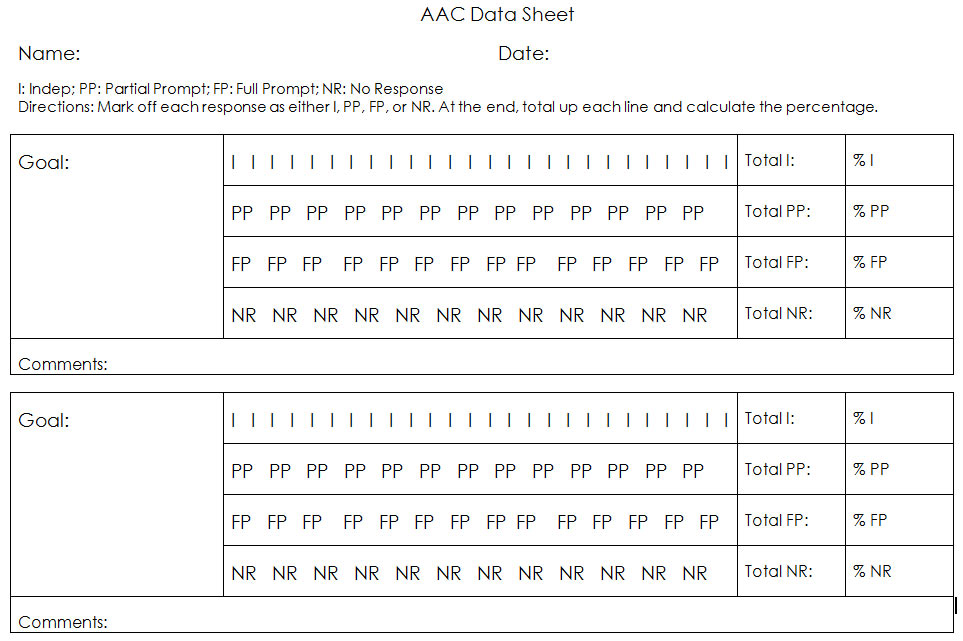

5. The easier it is to record the data, the more likely we are to be accurate. I’m a huge fan of taking care of this with some “up front” work. If we spend some time planning and developing forms that fit our setting, goals, and system of prompts, then we are more likely to use those and get reliable data. Like everyone else, we’ll jot notes on scrap paper, make hash marks on our hands, or scribble some hieroglyphics on a strip of masking tape from time to time. But on a good day, we try to go into the session with a pre-prepared data sheet like this one. It’s a little different than most, but I find it easy to use during a busy session. To use it, cross out I if the client’s response was independent, PP if a partial prompt was needed, FP if a full prompt was needed, or NR if there was no response. At the end, tally up the number of each one and divide by the total number of responses. Then convert to a percentage. Easy peasy.
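If you type your tallies into a computer afterward, the arithmetic above can be sketched in a few lines of Python. This is only an illustration of the tally-and-divide step, assuming the four codes from the data sheet (I, PP, FP, NR). The function name `summarize_session` and the sample session are hypothetical.

```python
from collections import Counter

# Response codes from the data sheet described above.
CODES = ("I", "PP", "FP", "NR")


def summarize_session(responses):
    """Return the percentage of responses recorded under each code."""
    if not responses:
        raise ValueError("No responses recorded")
    unknown = set(responses) - set(CODES)
    if unknown:
        raise ValueError(f"Unrecognized codes: {sorted(unknown)}")
    counts = Counter(responses)
    total = len(responses)
    # Tally each code, divide by the total, and convert to a percentage.
    return {code: round(100 * counts[code] / total, 1) for code in CODES}


# Hypothetical session with ten recorded responses.
session = ["I", "PP", "I", "FP", "I", "NR", "PP", "I", "I", "PP"]
summary = summarize_session(session)
print(summary)  # {'I': 50.0, 'PP': 30.0, 'FP': 10.0, 'NR': 10.0}
```

In this made-up session, half of the responses were independent, which is the number to watch across sessions.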

This post was written by Carole Zangari

8 Comments

Hi! I’m a school-based SLP working with kids on a wide spectrum of speech and language skills. I agree with all your tips. I make & use similar data sheets for some of my students. I collaborate with the teachers of our students with Autism on designing & implementing our data collection sheets, making sure we have the same definition for each skill. I also agree that we should not take data every session, for the same reason you stated. The problem with working in a school setting is that we are required by our district to take data every session. “If it’s not documented, it didn’t happen.” It’s not enough to document what you did & for how long, our data needs to be specific to the goals. We also need to take data for Medicaid purposes. So, when I have a group of four students working on different, sometimes similar, goals, I feel like all I do is take data. I know that this feeling is shared by many SLPs. I love working with elementary school students, but it certainly is very challenging!

Jessie, I’ve been in some places where they take data every session but not on every goal. That makes it a little easier. The over-emphasis on assessment means there is less time/energy for instruction. With less time for instruction, progress is hindered and the data are less than stellar. Seems like we need to do something to break the cycle! Thanks so much for stopping by with your comment.

Really like your thoughts on data collection. It’s nice to have these concepts explained so clearly. I will definitely share these with my student interns!

Thanks, Vicki! 🙂

Love this Post! (and your whole blog really…) I’m a CDA working under an AAC Team at a Special Needs school, we use a similar data sheet. I’m curious how you differentiate between Full Physical prompt, and No Response?

Janelle, I generally use NR when we determine not to use a full physical prompt. It doesn’t always make sense to follow up a partial prompt with a full physical assist, e.g., for a client learning word prediction who selected the wrong option or a student who drew an incorrect conclusion in an inferencing activity. We also use it for clients who resist full physical prompting and become agitated or embarrassed by it. Hope that makes sense. Thanks so much for stopping by and for the kind words.

OK. Very nice information, thanks for sharing. One question that I noted in your last response. How would you document NR vs. Incorrect responses? In your example of a Word Prediction response that was incorrect, wouldn’t it be more useful to know what he chose instead, than only have it listed as NR? Again, biting off too much, is such a risk, but not having the correct Data is also not useful. How do you document this kind of activity?

Lezli, thanks for your comments and questions. You are SO right that we have to find a balance. In my experience, we tend to take more data than we actually use and I think the more performance data we attempt to record, the less accurate the data tend to be. Of course, that is not always the case, but it worries me when people say they do all their paperwork at the end of the day/week because they have too much to track during the session. Hindsight may be good for some things but data collection probably isn’t one of them. It’s an individual thing, of course, and there are lots of ‘right’ ways to do it. If someone CAN take a lot of (accurate) data and if they actually use it to determine how to adjust their therapy, then the system makes sense and gets two thumbs up from us. In terms of NR vs Incorrect, it depends on whether there is a response. Sometimes clients don’t attempt a response, which would be coded as NR. If they responded and were wrong, then it would get Incorrect. For example, if I am teaching someone to type 3 letters BEFORE checking WP options and they check after every letter instead, that would be coded as incorrect, if what I am teaching is to use a more efficient WP process. It’s not as much about whether they got that particular item right or wrong, as it is about whether they are learning the new-and-improved, way-more-efficient process I am trying to teach. We wouldn’t use that approach with every client, though. Sometimes (often, in fact) it is about picking the correct WP option. It’s when clients are able to do that but are using a very slow, labor-intensive process that I would teach guidelines (like typing 3 letters before checking WP). Thanks again for stopping by to comment!